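- For deploying frigate, see the earlier article

- codeprojectai can be swapped for deepstack (though the latter has not been updated in a long time)

- Without going to the cloud, there is currently no good offline face recognition model that supports the Coral TPU (or I just haven't found one)

codeprojectai does support the Coral TPU, but its face recognition module does not yet

Given the above, object detection in this setup runs on the Coral TPU and returns results in 10+ ms, while face recognition still has to rely on the NAS's feeble CPU and takes roughly 1~3 s per result~ For spotting strangers it is just about usable~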

docker-compose file

    services:
      frigate:
        restart: unless-stopped
        container_name: frigate
        image: ghcr.io/blakeblackshear/frigate:stable
        shm_size: 256m # update for your cameras based on the shm calculation in the Frigate docs
        volumes:
          - /etc/localtime:/etc/localtime:ro
          - /share/Container/dockerdata/frigate/config:/config
          - /share/MEDIA2/frigate_storage:/media/frigate
          - type: tmpfs # Optional: 1GB of memory, reduces SSD/SD Card wear
            target: /tmp/cache
            tmpfs:
              size: 1000000000
        # ports:
        #   - "8971:8971" # Authenticated UI and API access without TLS. Reverse proxies should use this port.
        #   - "5500:5000"
        #   - "8554:8554" # RTSP feeds
        #   - "8555:8555/tcp" # WebRTC over tcp
        #   - "8555:8555/udp" # WebRTC over udp
        environment:
          - TZ=Asia/Shanghai
          - FRIGATE_RTSP_PASSWORD=frigate_pwd
          - LIBVA_DRIVER_NAME=i965
        # privileged: true
        devices:
          - /dev/bus/usb:/dev/bus/usb
          - /dev/dri/renderD128:/dev/dri/renderD128 # For intel hwaccel, needs to be updated for your hardware
        networks:
          - mynet

      double-take:
        container_name: double-take
        image: skrashevich/double-take
        restart: unless-stopped
        volumes:
          - /share/Container/dockerdata/double-take:/.storage
        environment:
          - TZ=Asia/Shanghai
        # ports:
        #   - 3000:3000
        networks:
          - mynet

      # deepstack:
      #   container_name: deepstack
      #   image: deepquestai/deepstack
      #   restart: unless-stopped
      #   environment:
      #     - TZ=Asia/Shanghai
      #     - VISION-FACE=True
      #   volumes:
      #     - /share/Container/dockerdata/deepstack:/datastore
      #   # ports:
      #   #   - 5000:5000
      #   networks:
      #     - mynet

      codeprojectai:
        container_name: codeprojectai
        image: codeproject/ai-server
        restart: unless-stopped
        environment:
          - TZ=Asia/Shanghai
        volumes:
          - /share/Container/dockerdata/codeprojectai/data:/etc/codeproject/ai
          - /share/Container/dockerdata/codeprojectai/modules:/app/modules
        # ports:
        #   - 32168:32168
        # devices:
        #   - /dev/bus/usb:/dev/bus/usb
        networks:
          - mynet

    networks:
      mynet:
        external: true

The Coral TPU is handed straight to frigate. If codeprojectai's face recognition gains TPU support later on, the Coral TPU can be passed to it instead so that it handles all the data in one place!

Configuration files

double-take

config.yml

    # Double Take
    # Learn more at https://github.com/skrashevich/double-take/#configuration

    auth: true

    mqtt:
      host: 192.168.12.200
      username: mosquitto
      password: mqtt_pwd
      # events published here are passed on to homeassistant, in preparation for the notifications set up later
      topics:
        # mqtt topic for frigate message subscription
        frigate: frigate/events
        # mqtt topic for home assistant discovery subscription
        homeassistant: homeassistant
        # mqtt topic where matches are published by name
        matches: double-take/matches
        # mqtt topic where matches are published by camera name
        cameras: double-take/cameras

    frigate:
      # https://frigate-noauth.localnas.top
      url: http://frigate:5000
      # frigate labels to process
      labels:
        - person
      # camera names to process
      cameras:
        - door

    detectors:
      # deepstack:
      #   url: http://deepstack:5000
      #   # key:
      #   # number of seconds before the request times out and is aborted
      #   timeout: 15
      #   # require opencv to find a face before processing with detector
      #   opencv_face_required: false
      #   # only process images from specific cameras, if omitted then all cameras will be processed
      #   cameras:
      #     - door
      aiserver:
        url: http://codeprojectai:32168
        # number of seconds before the request times out and is aborted
        timeout: 15
        # require opencv to find a face before processing with detector
        opencv_face_required: false
        cameras:
          - door

    time:
      # defaults to iso 8601 format with support for token-based formatting
      # https://github.com/moment/luxon/blob/master/docs/formatting.md#table-of-tokens
      # note: in Luxon, hh is the 12-hour token; use HH if you want 24-hour time
      format: yyyy-MM-dd hh:mm:ss
      # time zone used in logs
      timezone: Asia/Shanghai

codeprojectai

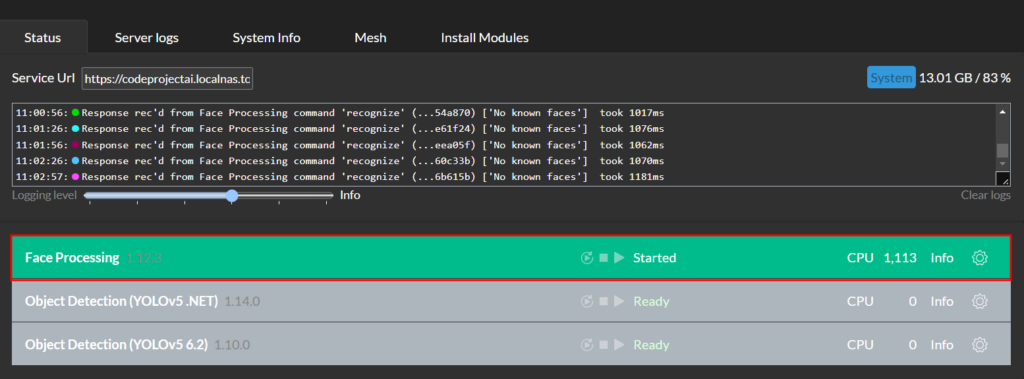

Only Face Processing needs to be enabled.

On CPU, recognition basically always takes more than 1 s~
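To check the latency on your own hardware, a single request can be timed by hand. The sketch below is only a rough illustration using the Python standard library. It assumes that CodeProject.AI exposes the DeepStack-style endpoint `/v1/vision/face/recognize` and accepts the picture in a multipart field named `image`; both are assumptions here, so check them against the API docs of your server version. The helper names `build_multipart` and `time_face_recognize` are made up for this example. Since the compose file above leaves port 32168 unpublished, the default URL only resolves from a container on `mynet`; otherwise publish the port and pass your own `base_url`.

```python
import json
import time
import urllib.request
import uuid


def build_multipart(field, filename, data, boundary):
    """Encode a single file as a multipart/form-data request body."""
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="{field}"; filename="{filename}"\r\n'
        "Content-Type: application/octet-stream\r\n\r\n"
    ).encode()
    tail = f"\r\n--{boundary}--\r\n".encode()
    return head + data + tail


def time_face_recognize(image_path, base_url="http://codeprojectai:32168", timeout=15.0):
    """POST one image and return (elapsed seconds, parsed JSON reply).

    The endpoint path and the "image" field name are assumptions
    (DeepStack-compatible API), not something confirmed by this article.
    """
    boundary = uuid.uuid4().hex
    with open(image_path, "rb") as fh:
        body = build_multipart("image", "snapshot.jpg", fh.read(), boundary)
    request = urllib.request.Request(
        f"{base_url}/v1/vision/face/recognize",
        data=body,
        headers={"Content-Type": f"multipart/form-data; boundary={boundary}"},
        method="POST",
    )
    start = time.perf_counter()
    with urllib.request.urlopen(request, timeout=timeout) as response:
        reply = json.loads(response.read())
    return time.perf_counter() - start, reply
```

Calling `time_face_recognize("door.jpg")` a few times in a row (with `door.jpg` being any saved snapshot) gives a feel for the spread; the first call is often slower while the module warms up.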

Face recognition

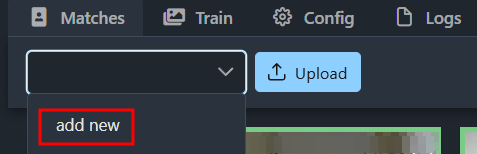

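Once everything is set up, take a walk in front of the camera and a pile of unrecognized images will show up in the double-take UI. Select the images you want to train on, then click add new to create a name and add them.

After that, walk in front of the camera again and see whether you get recognized~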
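Once recognition works, the results that double-take publishes under the `double-take/cameras` topic configured earlier can feed the notification step. The following is a minimal sketch of how such a message might be interpreted. The payload layout (`matches`, `misses`, `unknowns`, each entry carrying `name` and `confidence`) follows the examples in the double-take README as I remember them, so treat it as an assumption and compare it with a real message from your broker. The function name `summarize_camera_event` and the `min_confidence` threshold are invented for illustration.

```python
import json


def summarize_camera_event(payload, min_confidence=60.0):
    """Reduce one double-take camera message to known names and a stranger flag.

    Assumed payload keys: "camera", "matches", "misses", "unknowns".
    """
    event = json.loads(payload)
    # Names whose match confidence reaches the (hypothetical) threshold.
    known = sorted({
        match["name"]
        for match in event.get("matches", [])
        if match.get("confidence", 0) >= min_confidence
    })
    # Faces that were seen but not confidently tied to a trained name.
    unmatched = len(event.get("misses", [])) + len(event.get("unknowns", []))
    return {
        "camera": event.get("camera"),
        "known": known,
        "stranger": unmatched > 0 and not known,
    }
```

An MQTT client subscribed to `double-take/cameras/door` could pass each message body to this function and only notify when `stranger` is true; in Home Assistant the same check would normally live in an automation template instead.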
